Hi all,

I created a Kettle job plugin for sending files to Amazon S3. It is based on the JetS3t toolkit (jets3t-0.7.2). You can download the plugin HERE.

Below are the plugin GUI, an example job, and the resulting log output.

The plugin needs:

- Access Key: your S3 access key. You must have an S3 account.

- Private Key: your private key. This key won’t be displayed in the Kettle log output.

- S3 Bucket: the target bucket. A bucket is like a directory, and it must already exist.

- Filename: the path and filename of the file you want to send to S3.
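To make the four settings concrete, here is a minimal Java sketch of how they could be held and checked before a job runs. It is not the plugin's actual code: the class name `SendToS3Settings`, its methods, and the mask string are all hypothetical, and the upload itself (done through JetS3t in the plugin) is deliberately left out. It only illustrates two points from the list above: every field is required, and the private key should never reach the log output.

```java
// Hypothetical holder for the four plugin settings; NOT the plugin's real code.
final class SendToS3Settings {
    private final String accessKey;
    private final String privateKey;
    private final String bucket;
    private final String filename;

    SendToS3Settings(String accessKey, String privateKey, String bucket, String filename) {
        this.accessKey = requireText("Access Key", accessKey);
        this.privateKey = requireText("Private Key", privateKey);
        this.bucket = requireText("S3 Bucket", bucket);
        this.filename = requireText("Filename", filename);
    }

    // Rejects null or blank values so a misconfigured job entry fails early.
    private static String requireText(String label, String value) {
        if (value == null || value.trim().isEmpty()) {
            throw new IllegalArgumentException(label + " must not be empty");
        }
        return value.trim();
    }

    // Only the code that talks to S3 should ever call this.
    String getPrivateKey() {
        return privateKey;
    }

    // Safe for logging: the private key is replaced by a fixed mask.
    @Override
    public String toString() {
        return "SendToS3[accessKey=" + accessKey
                + ", privateKey=********"
                + ", bucket=" + bucket
                + ", filename=" + filename + "]";
    }

    public static void main(String[] args) {
        // Placeholder values for illustration only.
        SendToS3Settings settings = new SendToS3Settings(
                "MY_ACCESS_KEY", "MY_PRIVATE_KEY", "my-bucket", "/tmp/report.csv");
        System.out.println(settings);
    }
}
```

Masking inside `toString()` means any log line that prints the settings object stays safe by default, instead of relying on every caller to remember to hide the key.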

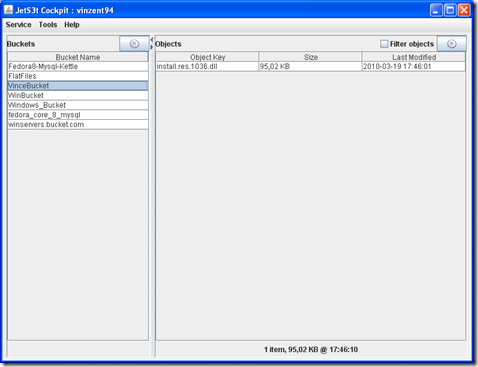

Once pushed to Amazon S3, you can see your file in the target bucket (here, I sent a random Windows DLL).

And this is the job icon.

I will soon add some new features, such as bucket creation in the UI, bucket listing, XML parsing of the S3 return code, and maybe encryption.

Feel free to contact me.

13 comments:

Nice information on sending files through Amazon S3, but my favorite way to send files is the option in Google Chat. I use it daily.

Will you be updating the plugin to work with Kettle 4.4?

Hi,

I'm working on it!

So many things to do and so little time...

Thanks for your comment

Vincent

Hi Vincent,

I came across your post. I am currently working on copying files to S3 in an ETL job. I am working with 5.2. Any suggestions?

Thank you,

Irina

Hi Vincent,

I am using Kettle 4.4 and I need to send a file from a local drive to an S3 bucket. I saw your comment that you are working on it. If it is completed, I need a reference on how to use the plugin in Kettle 4.4.

Hi Vincent,

I need to use your plugin to send a file to an S3 bucket. I saw your comment that you are working on Kettle 4.4 support. If it is completed, I need a reference on how to use the plugin in Kettle 4.4.

I'm facing an issue while dropping the step into the view: "pdi.jobentry.SendToS3.JobEntrySendToS3 cannot be cast to org.pentaho.di.trans.step.StepMetaInterface". Can anyone help me out, please?

I'm facing the same issue too: "pdi.jobentry.SendToS3.JobEntrySendToS3 cannot be cast to org.pentaho.di.trans.step.StepMetaInterface". Can anyone help me out, please?

Hi Vincent,

It sounds promising, but PDI 5 is already out. Have you upgraded the plugin, or do you know an alternative?

Thank you for this plugin, but unfortunately we work with Pentaho version 5 and this API doesn't match the current version of Pentaho.

Can you please provide us with an updated version of your API?

Thank you

Is there a way in Pentaho to get a list of files from S3 and copy them to local drive? Thanks
